This post is based on exercises published by Cyrille Rossant in his book “Learning IPython for Interactive Computing and Data Visualization”. Cyrille also has a blog well worth looking at: http://cyrille.rossant.net/blog/ (Thanks to Alfred Vachris and Boris Vishnevsky for the links).

As has been noted here before, pure Python can be very slow when looping through large blocks of data, but there are simple ways to speed things up dramatically. This is illustrated by alternative techniques to search a list of 10 million 2D coordinates to find the point closest to a specified location.

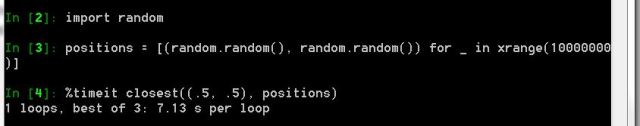

The screenshot below shows Python code to loop through the coordinates in the list “positions” and return the index of the one closest to “position”.

The positions list is generated with the random function, and the closest function is then run with a position of (.5, .5), as shown below:

The Python routine takes 7.1 s to loop through the 10 million rows of the positions list.
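Since the code itself appears only in the screenshots, here is a minimal sketch of what such a pure Python loop might look like. The names `closest`, `positions` and `position` follow the text above; the function body, the use of squared distance, and the reduced list size (100,000 rather than 10 million points, to keep the run short) are assumptions for illustration, not a transcription of the original code.

```python
import random


def closest(position, positions):
    """Return the index of the point in positions nearest to position."""
    x0, y0 = position
    best_dist = None
    best_index = None
    for i, (x, y) in enumerate(positions):
        # Squared distance is enough for comparison; no square root needed.
        dist = (x - x0) ** 2 + (y - y0) ** 2
        if best_dist is None or dist < best_dist:
            best_dist = dist
            best_index = i
    return best_index


# Assumed size: smaller than the 10 million points used in the post.
random.seed(0)
positions = [(random.random(), random.random()) for _ in range(100_000)]
ibest = closest((0.5, 0.5), positions)
```

In an IPython session, a call such as `%timeit closest((0.5, 0.5), positions)` would give a timing for comparison; the figure will depend on the machine and on the list size.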

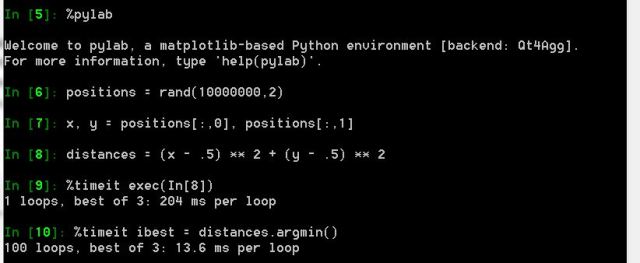

To speed things up using NumPy, the procedure is:

- Import pylab with the command %pylab, which is the most convenient way of using NumPy in an IPython interactive session.

- Generate the positions array with the rand function.

- Extract single column arrays, x and y, from the positions array.

- Create the distances array with the command:

distances = (x - .5)**2 + (y - .5)**2

- Extract the index of the minimum distance from the distances array with:

ibest = distances.argmin()

The total time to execute this procedure on the 10 million rows of the positions array was 218 ms, more than 30 times faster than the original code (7.1 s / 0.218 s ≈ 33).
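The NumPy steps listed above can be sketched as a plain script. This version uses an explicit `import numpy as np` and NumPy's `default_rng` generator in place of `%pylab` and `rand`, so that it also runs outside IPython; that substitution, the seed, and the reduced array size of 1 million rows are assumptions made for illustration.

```python
import numpy as np

# Assumed seed and size: 1 million rows rather than the post's 10 million.
rng = np.random.default_rng(0)
positions = rng.random((1_000_000, 2))

# Extract single-column arrays from the positions array.
x, y = positions[:, 0], positions[:, 1]

# Squared distance from (0.5, 0.5), computed for the whole array at once.
distances = (x - 0.5) ** 2 + (y - 0.5) ** 2

# Index of the closest point.
ibest = int(distances.argmin())
```

Every arithmetic step here operates on whole arrays, so the loop over rows runs inside NumPy's compiled code rather than in the Python interpreter.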

The message is: whenever working with large arrays, use NumPy functions that operate on the entire array with efficient C-based code, rather than looping through the arrays with interpreted Python code.