Following the previous post, which looked at the way different data types worked when transferring data between Excel and Python, this post looks at ways t0 get the best performance.

As a benchmark I have used a short routine that:

- Reads a range of 3 columns x 1,048,570 rows from Excel (this is 6 less than the maximum rows in a spreadsheet, in Excel 2007 and later).

- Sums the contents in each row and saves this data in a single column array

- Writes the sum of rows array back to Excel to a range 3 columns wide (generating 3 identical copies of the array).

Benchmark results were checked for the following combinations:

- 5 different combinations of array type (var, numpy_array, and float[]) to pass the data between Excel and Python.

- As 1, but using the Numba compiler.

- As 2, but looping the Sumrows routine 100 times

The data in the source array consists of numbers and blank cells, but no text.

For the first series of runs the data was read from Excel to a variant array in VBA, then passed to Python via Pyxll to sum the columns. The resulting array was then returned to VBA and written back to the spreadsheet. Typical VBA code is shown below:

Sub Timepysub()

Dim Func As String, InRName As String, InRange As Range, OutRange As String, Out As Long, TRange As String

Dim timenow As Double, timea(1 To 1, 1 To 4) As Double, RtnA As Variant

timenow = Timer

Func = Range("Func").Value

InRName = Range("in_range").Value

OutRange = Range("Out_Range").Value

TRange = Range("trange").Value

Set InRange = Range(InRName)

Out = Range("out").Value

RtnA = Application.Run(Func, InRange, Out)

timea(1, 1) = RtnA(1, 1)

timea(1, 2) = RtnA(2, 1)

timea(1, 3) = Timer - timenow

Range(OutRange).Value = RtnA

timea(1, 4) = Timer - timenow

Set InRange = Nothing

If Out >= 2 Then

Range(TRange).Value = timea

End If

End Sub

Note that the data range is declared as a range (rather than a variant, as I would normally do when working entirely in VBA). This is necessary to allow the full array of 1 million+ rows to be passed to Python, using “Application.Run”.

The results with the different options are shown in the screen shot below:

It can be seen that:

- In the first series, the fastest results were obtained using a var array for both input and output.

- The Sumrows time was significantly faster using a numpy_array, but transfer times were much longer.

- Using the Numba compiler significantly reduced the execution time for the Sumrows function in all cases, but the effect was very much greater when working with numpy_arrays, where the time was reduced by a factor of the order of 400!

- The much greater effect of Numba when working with numpy arrays was confirmed by looping through the Sumrows function 100 times. For this case the total execution time for the numpy arrays was more than 6 times faster than float arrays, and the execution of the Sumrows function was over 60 times faster.

The results when reading and writing from/to the spreadsheet directly from Python are shown below:

The execution times for this case are significantly slower than reading and writing from VBA because:

- The time to transfer the data is of the order of 2-3 slower than working in VBA.

- When using numpy arrays the blank cells are read as either ‘NoneType’ or as ‘numpy.float64’ with a value of ‘nan’ (not a number). This results in rows with blank cells returning either an error or an incorrect value, so in the Sumrows function it is necessary to check for the blank cells. This greatly slows down the performance of the function, in the case of the runs compiled with Numba the execution time being increased by a factor of over 100!

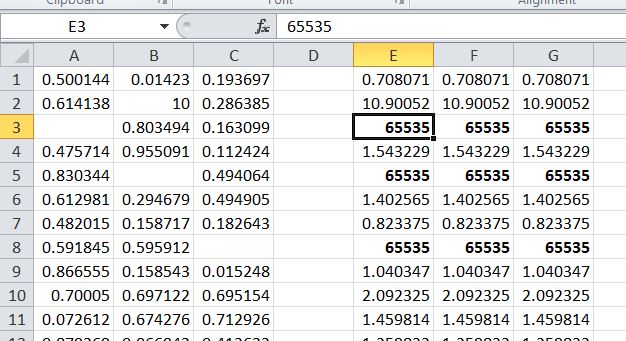

The results of using numpy arrays with dtype = np.float64 and not checking for ‘nan’ are shown in the screen shot below, where any row containing a blank returns 65535, rather than the sum of the two non-blank cells. Note however that if the data set contains no blanks there is a huge improvement in execution time by not checking for ‘nan’, especially when using the Numba compiler.

In summary:

- When transferring large amounts of data, and where use of VBA is acceptable, read and write the data in VBA and pass it to Python using either Pyxll float[] or numpy_array data types.

- If significant numerical processing is to be carried out in Python there can be a huge speed improvement by using the Numba compiler in conjunction with numpy_array.

- If the numerical processing is limited the float[] data type may be significantly faster.

- If Numba is not used then the Pyxll var data type may be the fastest (but only marginally faster than float[]).

- If it is necessary to read and/or write from Python, and the data may contain blanks, either read the data to a Python List of Lists, or use a np.array and clean the data (by checking for values that are not of type “float”) before carrying out any numerical processing.

- If it is certain that there are no blank cells then read the data to a numpy array using dtype = np.float64, and use the Numba compiler.

I know this is an old post, but just to let you know – passing floating point numpy arrays in PyXLL 4 is now significantly faster. If you need to pass a lot of data between Excel and Python or vice versa using “numpy_array” or “numpy_array” is now the fastest way (faster than var).

LikeLike